How Scoring and Incentives Work in Desearch (Beginner Friendly Guide)

Desearch is a decentralized search engine where contributors, called miners, compete to deliver the best search results. Their work is evaluated by validators using a scoring system based on relevance, timeliness, source quality, and summary accuracy. High-performing miners earn rewards in TAO tokens, while underperformers risk penalties or removal. Validators ensure fairness by testing outputs with queries and assigning scores that determine rewards. The system promotes continuous improvement through competition, driving higher-quality results over time. Powered by Bittensor, Desearch operates transparently, with all processes logged on an open blockchain.

Key Points:

- Miners gather data and generate search results.

- Validators test and score results using strict criteria.

- Rewards are distributed based on performance, incentivizing quality.

- Poor performance leads to penalties or deregistration.

- The system ensures fairness and improvement through competition.

Desearch's open-source framework encourages transparency and equal opportunity for participants.

The Basic Idea

The Evaluation Loop

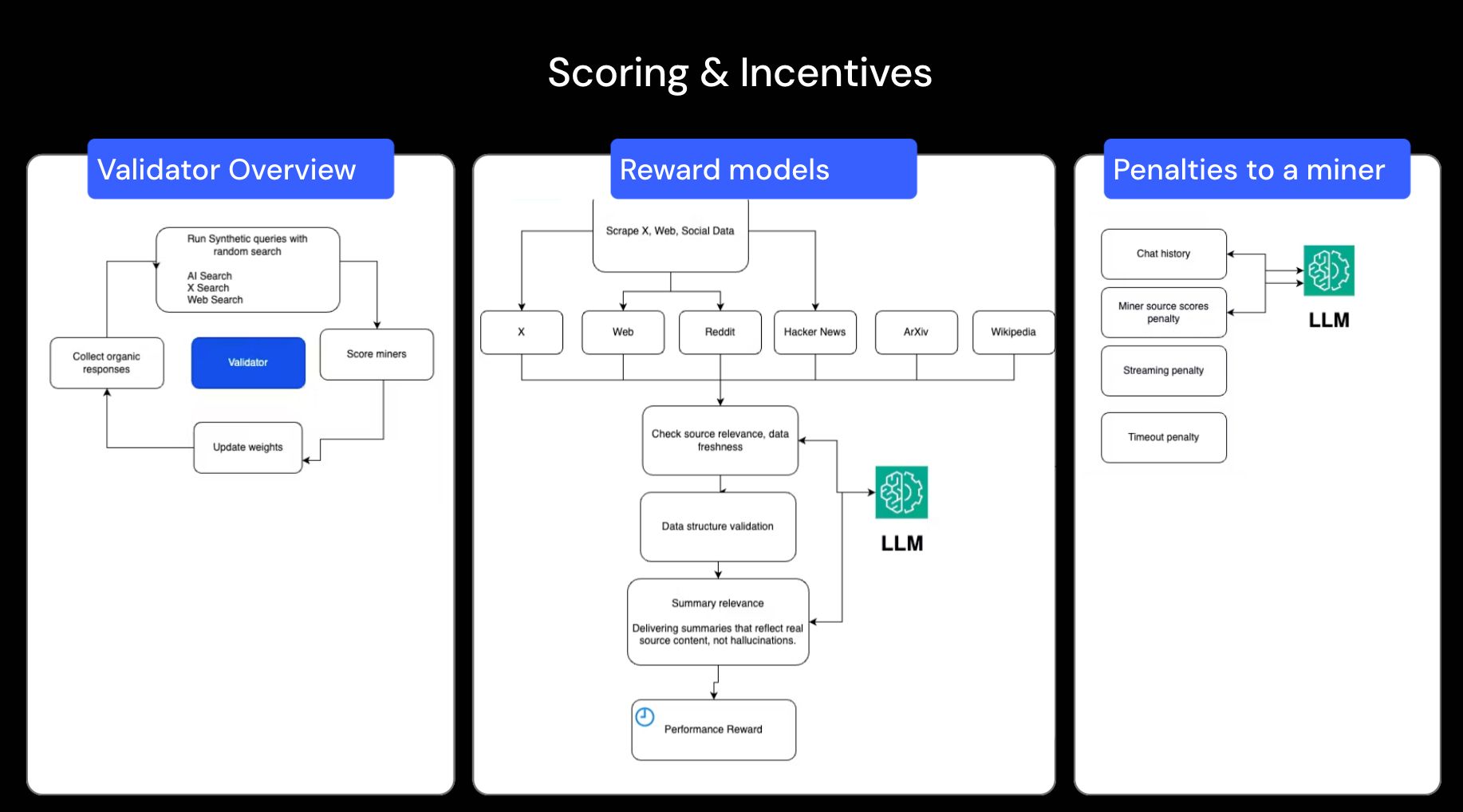

The process kicks off when a validator sends out a query to miners. Miners respond by tapping into various online data sources, using APIs and indexing tools to gather information. They then create a concise summary of the most relevant data and send their findings back to the validator.

Validators take these responses and use Large Language Models (LLMs) to assess them. They evaluate whether the answers address the query, verify the timeliness of the data, and ensure the summaries accurately reflect the original sources. After scoring the responses, validators submit their evaluations - referred to as weights - to the Bittensor blockchain.
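To make the loop concrete, here is a toy sketch of one evaluation round. It is not the Desearch validator code: the `MinerResponse` class, `toy_score`, and `evaluate_round` are hypothetical names, and a simple keyword check stands in for the LLM-based judging described above.

```python
from dataclasses import dataclass


@dataclass
class MinerResponse:
    uid: int            # hypothetical miner identifier
    summary: str        # the miner's summary of what it found
    links: list[str]    # sources backing the summary


def toy_score(query: str, response: MinerResponse) -> float:
    """Stand-in for LLM judging: fraction of query words that appear in the summary."""
    words = set(query.lower().split())
    if not words:
        return 0.0
    summary = response.summary.lower()
    found = {w for w in words if w in summary}  # simple substring match
    return len(found) / len(words)


def evaluate_round(query: str, responses: list[MinerResponse]) -> dict[int, float]:
    """Score every response, then normalize the scores into weights that sum to 1."""
    scores = {r.uid: toy_score(query, r) for r in responses}
    total = sum(scores.values())
    if total == 0:
        return {uid: 0.0 for uid in scores}
    return {uid: s / total for uid, s in scores.items()}


responses = [
    MinerResponse(1, "Bittensor subnet rewards explained", ["https://example.com/a"]),
    MinerResponse(2, "Unrelated cooking recipe", ["https://example.com/b"]),
]
weights = evaluate_round("bittensor subnet rewards", responses)
```

In this toy round, the miner whose summary covers the query ends up with all of the weight. A real validator would use far richer judging, but the shape of the loop (query, responses, scores, weights) is the same.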

How Quality Improves Over Time

The scoring process fuels a system designed to enhance quality over time. By rewarding better performance, the network creates a continuous improvement cycle, described in the Bittensor documentation as a "virtuous cycle". To maintain or increase their earnings, miners must consistently refine their results.

This improvement is further reinforced by a deregistration policy. Miners with the lowest scores are removed from the network, making space for new participants. Even top-performing miners face the risk of deregistration if they are outperformed.

Who Is Involved

Desearch operates with two essential roles: one focuses on gathering and processing data, while the other ensures quality by testing and scoring it. Let's dive into how these roles function within Desearch.

Data Providers (Miners)

Miners aren't crypto miners - they don't solve cryptographic puzzles or validate blockchain transactions. Instead, they gather data by scraping websites, calling APIs, and indexing content from sources like X (formerly Twitter), Reddit, arXiv, and the broader web. Using this data, miners work with language models to create query-specific summaries.

Their job involves optimizing API calls to platforms like Google, YouTube, and Twitter to retrieve the most relevant information. It's a competitive process. Miners with lower performance scores risk being removed from the network altogether. Their efficiency and accuracy directly affect their scores and rewards.

Validators

Validators play a critical role in maintaining quality. They test the outputs generated by miners by sending both synthetic and real user queries, then evaluate the responses. Using large language models, they check whether the answers are relevant, up-to-date, and accurately summarize the original data.

After scoring the miners' outputs, validators submit their evaluations - referred to as weights - to the Bittensor blockchain. These weights determine the rewards miners receive.

How Validators Test Miners

Validators check that miner performance meets the network's standards. They do this by sending queries, collecting responses, and scoring those responses against strict criteria. This process identifies miners who consistently deliver reliable and valuable results.

Synthetic vs. Organic Queries

To test miners effectively, validators rely on two main types of queries: synthetic queries and organic queries.

- Synthetic queries are created by the validator's internal systems to test specific technical behaviors. These controlled tests measure factors like speed, adherence to protocols, and the structure of outputs. One key advantage of synthetic queries is their ability to prevent miners from gaming the system by precomputing or caching results.

- Organic queries, on the other hand, reflect real-world usage. They represent how actual users interact with the search system, ensuring that miners are not just technically proficient but also capable of meeting practical needs.

Both types of queries are essential. Synthetic queries establish a consistent benchmark for fair evaluations, while organic queries ensure the system remains aligned with its ultimate purpose - delivering relevant, high-quality, real-time search results that users can depend on.

What Validators Measure

Validators assess miner responses across multiple performance dimensions. In the Desearch system, this evaluation is divided into three main categories: Twitter Scoring (50%), Summary Scoring (40%), and Search Scoring (10%).

- Twitter Scoring: Miners submit 10 relevant links, which are then assessed for their depth and relevance to the query.

- Summary Scoring: This measures how well the AI-generated summary addresses the original prompt, focusing on clarity, precision, and completeness.

- Search Scoring: Validators evaluate the relevance of web links to the keywords and themes of the query.

How Responses Are Scored

Submitted responses are scored in the three weighted categories introduced above: Twitter Scoring (50%), Summary Scoring (40%), and Search Scoring (10%). Each category assesses a different aspect of quality, and together they determine the rewards miners earn.
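As a rough illustration of how the three categories could combine, the sketch below computes a weighted sum using the 50/40/10 split. The function name, the dictionary keys, and the assumption that each category score lies between 0 and 1 are ours; the actual validator may combine scores differently.

```python
# Category weights taken from the text: Twitter 50%, Summary 40%, Search 10%.
CATEGORY_WEIGHTS = {"twitter": 0.50, "summary": 0.40, "search": 0.10}


def combined_score(category_scores: dict[str, float]) -> float:
    """Weighted sum of per-category scores (each assumed to be in [0, 1])."""
    missing = set(CATEGORY_WEIGHTS) - set(category_scores)
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    return sum(
        CATEGORY_WEIGHTS[name] * category_scores[name] for name in CATEGORY_WEIGHTS
    )


# Strong Twitter links, a mediocre summary, and perfect web links.
example = combined_score({"twitter": 0.8, "summary": 0.5, "search": 1.0})
```

Here the total works out to roughly 0.7 (0.40 + 0.20 + 0.10). Because Twitter and Summary scoring carry 90% of the weight between them, a perfect Search score cannot make up for weak links or a weak summary.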

Relevance and Freshness

Validators use LLMs to judge whether a response directly addresses the query. For Twitter-based responses, it's essential to include the required number of links that directly answer the question. If the links are only loosely related or fail to address critical parts of the query, the relevance score drops significantly. Freshness also plays a major role - Desearch prioritizes real-time data, especially from platforms like X and Reddit.

Source Quality

The reliability of the sources miners provide is another critical factor. Validators assess whether the sources are trustworthy and genuinely useful. For Twitter links, they check if the content offers meaningful insights rather than shallow observations. For web search results, they evaluate how well the links align with the query's keywords and themes. Poor-quality sources, like spam or overly generic content, result in lower scores. Desearch adopts a "winner-take-all" approach, where miners who consistently provide high-quality sources earn rewards, while those submitting subpar data face the risk of being deregistered.

Output Structure

Well-structured, developer-friendly outputs are crucial because Desearch is designed to serve AI agents and applications that depend on clean, predictable data formats via the Desearch API. Validators assess how organized and ready-for-use the responses are. Missing fields, inconsistent formatting, or broken data structures lead to lower scores, as they create challenges for downstream processes.
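A structure check of this kind can be sketched in a few lines. The field names below (`query`, `summary`, `links`) are a hypothetical schema chosen for illustration, not the actual Desearch response format.

```python
# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"query": str, "summary": str, "links": list}


def structure_problems(response: dict) -> list[str]:
    """Return a list of structural problems; an empty list means the response is clean."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            problems.append(
                f"wrong type for {field}: expected {expected_type.__name__}"
            )
    # Only inspect individual links when the field is actually a list.
    links = response.get("links")
    if isinstance(links, list):
        for i, link in enumerate(links):
            if not (isinstance(link, str) and link.startswith(("http://", "https://"))):
                problems.append(f"links[{i}] is not an http(s) URL")
    return problems


good = {"query": "q", "summary": "s", "links": ["https://example.com"]}
bad = {"summary": 5, "links": ["ftp://x"]}
```

Calling `structure_problems(good)` returns an empty list, while `structure_problems(bad)` reports the missing `query` field, the non-string summary, and the malformed link. A validator could lower the score in proportion to the number of problems found.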

Summary Accuracy

After evaluating structure and source quality, validators focus on the accuracy of miner-generated summaries. They use LLMs to ensure the summaries accurately reflect the original content, assessing them for depth, clarity, and precision. Summaries that include fabricated details or misleading statements are penalized.

This rigorous focus on accuracy ensures users can rely on the information provided without having to verify every detail themselves.

Performance and Rewards

How Rewards Work

In Desearch, rewards come in the form of TAO tokens, and how much you earn depends on how well you perform compared to other miners. Validators play a key role here by assigning weights to each miner based on their scores. These weights determine your share of the total rewards pool.

Validators are constantly at work, calculating weight vectors from recent performance and updating them on-chain. At the end of each tempo (which equals 360 blocks or about 72 minutes), the Yuma Consensus module finalizes the reward distribution. This process factors in both the calculated weights and the stakes of the validators. This setup ensures that no single validator can manipulate the system, keeping things fair for everyone.
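The arithmetic behind these numbers is easy to check. The sketch below assumes a block time of about 12 seconds (which is what makes 360 blocks come to roughly 72 minutes) and shows a plain proportional split of a reward pool. The proportional split is a simplification for illustration: the real distribution is finalized by Yuma Consensus, which also takes validator stake into account.

```python
BLOCK_TIME_SECONDS = 12  # assumption: roughly 12 seconds per block
TEMPO_BLOCKS = 360       # tempo length stated in the text


def tempo_minutes(blocks: int = TEMPO_BLOCKS,
                  block_time_s: float = BLOCK_TIME_SECONDS) -> float:
    """Length of one tempo in minutes."""
    return blocks * block_time_s / 60


def reward_shares(weights: dict[int, float], pool: float) -> dict[int, float]:
    """Split a reward pool in proportion to each miner's weight (simplified)."""
    total = sum(weights.values())
    if total <= 0:
        return {uid: 0.0 for uid in weights}
    return {uid: pool * w / total for uid, w in weights.items()}


# Miner 1 has three times the weight of miner 2, so it gets three times the share.
shares = reward_shares({1: 3.0, 2: 1.0}, pool=1.0)
```

With these inputs, `tempo_minutes()` gives 72 minutes, and miner 1 receives 0.75 of the pool against 0.25 for miner 2.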

Penalties Explained Simply

Just as rewards encourage excellence, penalties are there to uphold high standards. If your performance slips, penalties will lower your scores, which directly impacts both your rewards and your standing in the network. Validators impose penalties for several common issues:

- Irrelevant or low-quality results: If your Twitter links don't address the query or your summaries include inaccurate or fabricated information, your scores will take a hit.

- Slow response times: Delays in returning results also lead to lower scores, as speed is a critical factor.

- Structural errors: Mistakes like missing fields or broken formatting disrupt AI data processing and result in reduced scores.

In short, the system is designed to reward excellence while ensuring that the network stays reliable by weeding out underperformers.
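To picture how penalties could eat into a score, here is a toy model. The penalty factors, the linear slowdown, and the `timeout_s` parameter are all assumptions made for illustration; the text does not specify how Desearch validators actually compute penalties.

```python
def penalized_score(base_score: float, latency_s: float,
                    timeout_s: float, structure_ok: bool) -> float:
    """Toy penalty model: slower and badly structured responses score lower."""
    if not 0.0 <= base_score <= 1.0:
        raise ValueError("base_score must be between 0 and 1")
    if latency_s >= timeout_s:
        return 0.0  # too slow: treated as no usable answer
    speed_factor = 1.0 - latency_s / timeout_s       # linear slowdown penalty (assumed)
    structure_factor = 1.0 if structure_ok else 0.5  # hypothetical 50% cut for broken output
    return base_score * speed_factor * structure_factor


fast = penalized_score(0.8, latency_s=2.0, timeout_s=10.0, structure_ok=True)
broken = penalized_score(0.8, latency_s=2.0, timeout_s=10.0, structure_ok=False)
late = penalized_score(0.8, latency_s=12.0, timeout_s=10.0, structure_ok=True)
```

The same base score of 0.8 ends up at about 0.64 for a fast, clean response, about 0.32 when the structure is broken, and 0 when the response misses the timeout. Whatever the real formula is, the takeaway is the same: quality, speed, and structure all have to hold up at once.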

Why Competition Matters

How Miners Are Compared

In the world of Desearch, miners aren't judged in isolation. Instead, validators assess their performance across numerous queries and over extended periods. Using weight vectors, validators rank miners, creating a weight matrix. This matrix is then fed into the Yuma Consensus algorithm, which ultimately determines the rewards miners receive.
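The sketch below shows what a weight matrix looks like and one simple way to combine it: a stake-weighted average of each validator's normalized weights. This is a simplified stand-in, not the Yuma Consensus algorithm itself, which does more than average. The function names and example numbers are hypothetical.

```python
def normalize(row: list[float]) -> list[float]:
    """Scale one validator's weights so they sum to 1."""
    total = sum(row)
    return [w / total for w in row] if total > 0 else [0.0] * len(row)


def stake_weighted_scores(weight_matrix: list[list[float]],
                          stakes: list[float]) -> list[float]:
    """weight_matrix[v][m] is the weight validator v assigns to miner m."""
    total_stake = sum(stakes)
    if total_stake <= 0:
        raise ValueError("total stake must be positive")
    rows = [normalize(row) for row in weight_matrix]
    n_miners = len(rows[0])
    return [
        sum(stakes[v] * rows[v][m] for v in range(len(rows))) / total_stake
        for m in range(n_miners)
    ]


# Two large validators broadly agree; a small validator backs only miner 2.
weight_matrix = [
    [3.0, 1.0, 0.0],
    [2.0, 2.0, 0.0],
    [0.0, 0.0, 4.0],
]
stakes = [100.0, 100.0, 10.0]
scores = stake_weighted_scores(weight_matrix, stakes)
```

In this example miner 0 comes out ahead of miner 1, and miner 2 receives only a small score because the single validator backing it holds little stake. That illustrates why one validator alone cannot swing the outcome.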

Here's the kicker: when one miner raises their game - maybe by making more efficient Twitter API calls or delivering sharper summaries - it raises the bar for everyone. This ripple effect improves the overall network quality, pushing all participants to keep refining their performance. It's a competitive ecosystem that highlights the differences between decentralized and traditional centralized systems.

Decentralized vs. Centralized Systems

This competitive framework sets Desearch apart from centralized models. Traditional search engines operate under the control of a single company. That company decides what defines quality, how algorithms function, and who gets access. This monopolized approach often leads to stagnation because there's little external pressure to innovate or improve.

Desearch flips the script. Here, independent validators evaluate miners based on real performance rather than corporate agendas.

Desearch thrives on what it calls "equality of opportunity." This means that anyone equipped with the right hardware and expertise can compete. Smaller players, rather than being sidelined by corporate giants, can carve out profitable niches within the network's reward system. The diversity of expertise among miners ensures the network remains dynamic and constantly improving.

How This Relates to Bittensor

How Responsibilities Are Divided

Bittensor provides the backbone for decentralized operations, ensuring a well-organized framework where roles are clearly defined. Within this system, Desearch operates as Subnet 22, relying on Bittensor's blockchain as the core for all its activities. This setup ensures no single participant has control over the entire system, keeping things fair and balanced.

Here's how it works: miners handle the heavy lifting by gathering data, interacting with APIs, and producing search results. Meanwhile, validators independently assess these results and assign quality scores. These scores, stored as weight vectors on the blockchain, are processed using the Yuma Consensus algorithm to finalize rewards every tempo (which equals 360 blocks). This separation of tasks ensures decentralization at every level - miners can't manipulate their own scores, and validators don't directly control payouts.

Now, let's dive into the specifics of how Bittensor supports this decentralized process.

What Bittensor Provides

Bittensor sets the stage for decentralized evaluation with a metagraph - a global directory that tracks Subnet 22's miners and validators along with their performance metrics. This system is designed to manage up to 256 unique identifier slots, using a pruning process to phase out underperforming nodes.

Communication between validators and miners is streamlined through the Axon-Dendrite protocol and Synapse data objects. To ensure fairness, new miners are granted an immunity period of about 4,096 blocks (roughly 13.7 hours), giving them time to improve their contributions without the risk of being immediately removed.
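The immunity period and the pruning idea can be sketched as follows. The code assumes a block time of about 12 seconds (consistent with 4,096 blocks being roughly 13.7 hours) and uses a deliberately simple rule: among miners past their immunity period, the lowest-scoring one is the candidate for removal. The `Miner` class and `pruning_candidate` function are hypothetical, and Bittensor's actual pruning rules are more detailed.

```python
from dataclasses import dataclass
from typing import Optional

BLOCK_TIME_SECONDS = 12  # assumption: roughly 12 seconds per block
IMMUNITY_BLOCKS = 4096   # immunity period stated in the text


def immunity_hours(blocks: int = IMMUNITY_BLOCKS) -> float:
    """Length of the immunity period in hours."""
    return blocks * BLOCK_TIME_SECONDS / 3600


@dataclass
class Miner:
    uid: int
    score: float
    registered_at_block: int


def pruning_candidate(miners: list[Miner], current_block: int,
                      immunity_blocks: int = IMMUNITY_BLOCKS) -> Optional[Miner]:
    """Lowest-scoring miner that is no longer immune, or None if all are immune."""
    eligible = [
        m for m in miners
        if current_block - m.registered_at_block >= immunity_blocks
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda m: m.score)


miners = [
    Miner(uid=1, score=0.90, registered_at_block=0),
    Miner(uid=2, score=0.20, registered_at_block=0),
    Miner(uid=3, score=0.05, registered_at_block=9000),  # newly registered
]
candidate = pruning_candidate(miners, current_block=10_000)
```

Miner 3 has the lowest score but registered only 1,000 blocks ago, so it is still immune; the candidate for removal is miner 2. This is how the immunity period gives newcomers time to catch up.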

Bittensor also encourages honest participation by aligning rewards with consensus. Validators who produce evaluations that match the consensus receive higher rewards, while those who deviate see reduced emissions. This approach ensures that maintaining high-quality standards benefits everyone, discouraging any attempts to game the system.

Want to Go Deeper?

Explore the Code

If you're looking to dive into the technical side of things, the complete codebase for Desearch offers a thorough look into its inner workings.

The project is entirely open source. You can find the full codebase for Desearch (Subnet 22) on GitHub at https://github.com/Desearch-ai/subnet-22. This repository lays out exactly how validators generate queries, score responses, and calculate rewards.

The repository is structured to make navigation simple. For example:

- The neurons/ directory contains the core scripts for validators and miners.

- The desearch/ folder houses the logic for evaluation.

These incentives are not theoretical. Developers can actively participate in the network. In the next guide, we walk through how to become a data provider miner on Desearch.

Final Thoughts

Scoring and incentives form the backbone of Desearch, creating a system where quality is measurable and improvement is constant.

The beauty of this model lies in its simplicity: miners who provide relevant, well-structured, and up-to-date data earn higher rewards, while those who fall behind risk being phased out. Instead of relying on centralized oversight, this system encourages competition and uses clear metrics to maintain quality. The result? Continuous improvement and progress across the network.

The reward structure offers miners a clear path to success: focus on strong engineering and consistent performance. The steep incentive curve means top-performing miners earn significantly more, while small lapses in quality can lead to notable losses. There's no room for shortcuts here - validators regularly update their scoring models, ensuring that only reliable systems delivering consistent, high-quality results thrive.